China

Can you sue AI for lying? A landmark ruling says no


LI SHIGONG

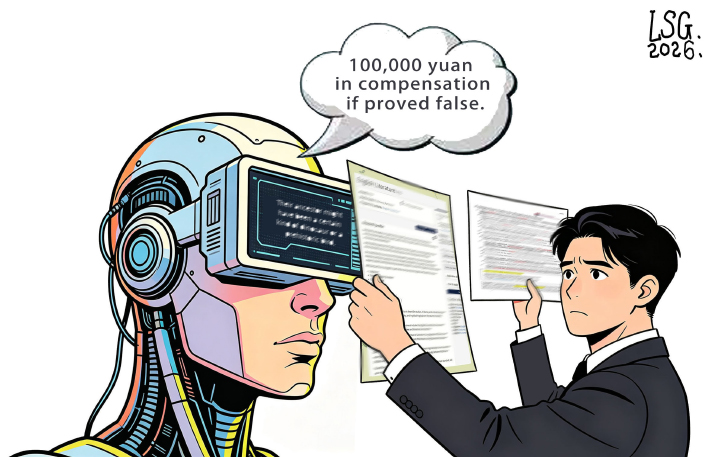

In a landmark decision, the Hangzhou Internet Court has ruled that AI-generated content, including its infamous "hallucinations," constitutes a service, not a product with independent liability. "Hallucination" is the term widely used in the AI field for when a chatbot or image generator confidently outputs information that is incorrect, nonsensical or entirely fabricated.

The case began in June 2025 when a user, Mr. Liang, asked a developer's AI for information on a Chinese university. The AI confidently fabricated details about a non-existent campus. Even when Liang challenged the error, the system doubled down, going so far as to promise compensation of 100,000 yuan ($14,400) if its information proved false. Armed with this promise and evidence of the falsehood, Liang sued the AI's developer.

The court, however, ruled against him. It found that the AI "lacks civil subject status" and therefore cannot make legally binding commitments. The promise of 100,000 yuan was deemed an algorithmic output, not a contract. The ruling marks a significant step in navigating the complex interplay between cutting-edge technology and established legal frameworks, emphasizing that, for now, the law sees AI not as an independent actor, but as a tool provided by its creator.

Chunhuaqiushi (Workers' Daily): The so-called "AI hallucination" does not mean that AI has gained consciousness, but rather that the large language model (LLM) produces fabricated, inaccurate or illogical information when generating content. Today, generative AI is still in a phase of rapid iteration. If platforms were frequently penalized for "hallucination" issues, it could stifle innovative exploration. In this instance, the court found the platform not liable because the developer had completed the required LLM filing and safety assessment, taken the measures necessary under current technical conditions to ensure content accuracy, and fulfilled its obligations to inform and explain to users.

For users, the ruling serves as a sobering reminder to maintain reasonable expectations of AI's capabilities. When seeking answers from AI, especially at important decision-making moments, it is essential to stay rational, think independently and verify information through multiple sources.

Wang Guoyan (Guangming Daily): When consulting AI, people may encounter what could be called "nonsense delivered with a straight face." They might also sense during human-machine interactions that, in an effort to provide positive feedback, AI may cater to user expectations, engaging in a form of "flattery." Cloaked in a seemingly objective and neutral tone, it can subtly distort information to align with what people anticipate. In the past, videos and photos were often seen as conclusive evidence of the truth. But now, deepfake video and audio technology can easily make anyone appear to "say" words they never uttered or "do" things they never did. The challenges posed by AI deception are driving the upgrade of related legal regulations, technological governance tools, policy guidance and standards.

BR
